Introduction

A couple of weeks ago I started upgrading an environment that is configured with VMware vSAN. This in itself isn’t a difficult task nowadays. It does, however, become somewhat problematic when, after upgrading a couple of ESXi hosts, the vSAN cluster starts throwing all sorts of errors that don’t seem to make any sense. In this blog post I will explain which issues we ran into and how we fixed them.

For reference, these are the environment specifics:

- Original vSphere ESXi hosts 6.7 U2

- Original vCenter Server 6.7 U2

- New vSphere ESXi hosts 6.7 U3 Build 16316930

- New vCenter Server 6.7 U3 Build 16616668

Troubleshooting

A few weeks before I started working on this environment, I had upgraded another vSAN environment to the latest and greatest vSphere ESXi builds, and that all went fine without any issues. Since this environment had the same underlying hardware, I reused all my vSphere Update Manager (VUM) baselines, did my thing and started upgrading!

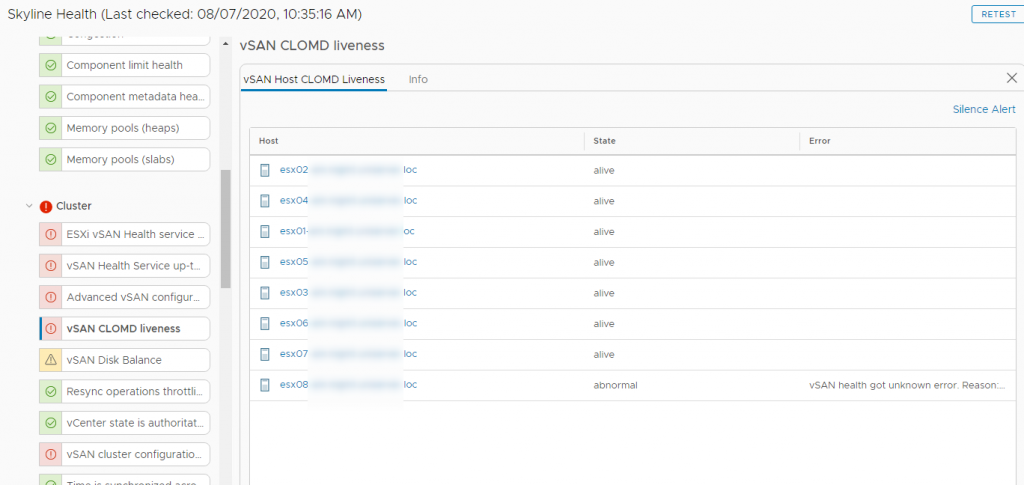

After upgrading the first host, the vSAN cluster started reporting the following issues:

Physical disk health retrieval issues: vSAN health got unknown error. Reason: None.

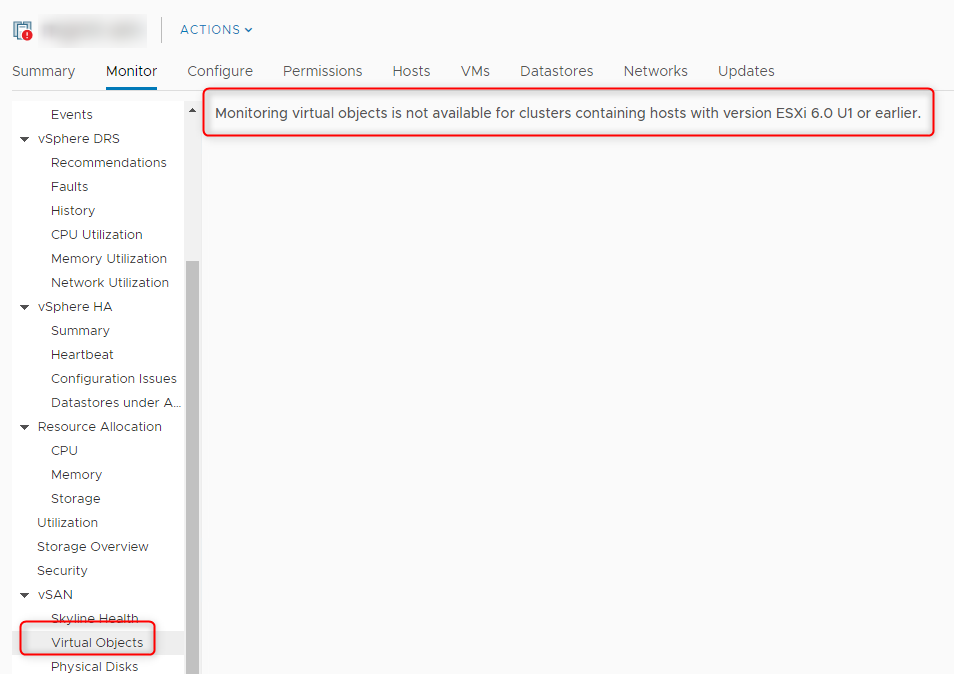

Virtual Objects view under vSAN: Monitoring virtual objects is not available for clusters containing hosts with version ESXi 6.0 U1 or earlier.

This one was especially weird, because the complete environment was built on 6.7; it has never even seen vSphere 6.0 U1 or earlier.

vSAN CLOMD liveness: State abnormal vSAN health got unknown error. Reason: None.

Once we saw these, we started troubleshooting the environment. We checked the CLOMD service, rebooted the host, re-checked the VUM updates, restarted services and searched online resources. We triple-checked the vSAN Health Service and the vSAN functionality on the ESXi host, and all of it worked without any noticeable issues. Nothing we tried made the errors go away, until I realised something…

The fix

I realised that we had started the upgrade with the ESXi hosts, just as I had done on the other environment I upgraded earlier. What I forgot, however, is that this vCenter Server was not on 6.7 U3 yet, while we were updating the hosts to 6.7 U3. On its own this is not a problem at all, as you can see in the VMware Interoperability Matrix below:

For a while now you have been allowed a small version discrepancy between the vCenter Server and the ESXi hosts; in the old days this was a lot stricter. What does not work, though, is doing this in an environment that has VMware vSAN enabled. VMware vSAN has its own place within the VMware Interoperability Matrix, as you can see in the figure below:

As you can see, this combination is not supported. At this point I figured the issues we were having had to be caused by the vCenter Server version not being interoperable with the vSAN version. So we started updating the vCenter Server to version 6.7 U3 Build 16616668. I wrote a small guide on how to do this earlier; check out the following blog post if you are interested.
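To make the rule concrete, here is a minimal Python sketch of the check we effectively skipped. It assumes a simplified rule of thumb (in a vSAN cluster, the vCenter Server must be on the same or a newer update level than every ESXi host) and compares releases only as major, minor and update numbers, ignoring patches and EPs. The function names `parse_release` and `vsan_cluster_supported` are hypothetical; this is not a VMware tool, and the Interoperability Matrix remains the authoritative source.

```python
def parse_release(label: str) -> tuple[int, int, int]:
    """Parse a label like '6.7 U3' or '6.7' into (major, minor, update).

    Simplification: patch levels and EPs within an update are ignored.
    """
    parts = label.split()
    major, minor = (int(x) for x in parts[0].split("."))
    update = 0
    if len(parts) > 1 and parts[1].upper().startswith("U"):
        update = int(parts[1][1:])
    return (major, minor, update)


def vsan_cluster_supported(vcenter: str, esxi_hosts: list[str]) -> bool:
    """Hypothetical rule of thumb for a vSAN cluster: the vCenter Server
    must be on the same or a newer release than every ESXi host."""
    vc = parse_release(vcenter)
    return all(vc >= parse_release(host) for host in esxi_hosts)


# vCenter still on 6.7 U2 while the first host is already on 6.7 U3.
print(vsan_cluster_supported("6.7 U2", ["6.7 U3", "6.7 U2", "6.7 U2"]))  # False
# After upgrading vCenter to 6.7 U3.
print(vsan_cluster_supported("6.7 U3", ["6.7 U3", "6.7 U2", "6.7 U2"]))  # True
```

Comparing tuples keeps the check simple: Python orders `(6, 7, 2)` before `(6, 7, 3)`, so a vCenter on 6.7 U2 fails as soon as one host reaches 6.7 U3, which is exactly the state our cluster was in.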

Once we had upgraded the vCenter Server, the vSAN Health Service reported that everything was happy and green again! All of the errors were resolved.

Conclusion

So there you have it, guys. When you are upgrading a vSAN-enabled vSphere cluster, always keep in mind that vSAN has its own place in the VMware Interoperability Matrix! Make sure to stick to it and read the matrix carefully.

Another important thing to remember is that there is an update sequence you should follow to avoid running into issues like the ones we encountered. The sequence is there for a reason. In this sequence, which can be found on this link, the starting point in most environments would be vRealize Automation, vRealize Orchestrator and vRealize Operations. The vCenter Server, ESXi and vSAN are among the last couple of parts to be upgraded.
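As an illustration, the following Python sketch checks a planned upgrade order against a heavily simplified version of that sequence. The stage numbers are an assumption based only on the order described above (the vRealize products first, then vCenter Server, then ESXi, then vSAN); products sharing a stage are not ordered relative to each other here, and `UPDATE_STAGES` and `out_of_order_steps` are hypothetical names. For a real environment, use the full list in VMware's update sequence KB.

```python
# Simplified, assumed stages; the full and authoritative order is in
# VMware's update sequence KB. Same stage = no relative order enforced.
UPDATE_STAGES = {
    "vRealize Automation": 1,
    "vRealize Orchestrator": 1,
    "vRealize Operations": 1,
    "vCenter Server": 2,
    "ESXi": 3,
    "vSAN": 4,
}


def out_of_order_steps(plan: list[str]) -> list[tuple[str, str]]:
    """Return (earlier, later) pairs where a product from a later stage
    is scheduled before a product from an earlier stage."""
    problems = []
    for i, earlier in enumerate(plan):
        for later in plan[i + 1:]:
            if UPDATE_STAGES[earlier] > UPDATE_STAGES[later]:
                problems.append((earlier, later))
    return problems


# What we effectively did: hosts first, vCenter Server afterwards.
print(out_of_order_steps(["ESXi", "vCenter Server", "vSAN"]))
# [('ESXi', 'vCenter Server')]
```

An empty list means the plan respects the simplified stages; any returned pair points at a step that should be moved earlier.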

While we are at it, you should also be aware of the VMware vSphere back-in-time release upgrade restrictions. These restrictions mean that you cannot upgrade to a newer vSphere version if the target build was released before the build you are currently running, which makes it “back-in-time”. I will explain this with the example below:

Let’s say you are running vSphere ESXi 6.5 U3 EP 20 (build date 06/30/2020) and you want to upgrade to 6.7 U1 (build date 10/16/2018). You would think that this is possible because 6.7 U1 is the higher version, right? Well, in that case you would be wrong. Since the 6.5 build you are running is newer than the 6.7 U1 release, you will not be able to upgrade to it, because that release is considered “back-in-time”. Looking at the vSphere ESXi build list, you will see that you have to update to at least 6.7 U3 EP 14 (build date 04/07/2020).
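The core idea can be sketched in a few lines of Python: compare the dates of the running build and the target build instead of their version numbers. This is a simplified date comparison under the assumption that the dates from the example are the relevant ones; `BUILD_DATES` and `is_back_in_time` are hypothetical names, and the VMware back-in-time KB and ESXi build list remain authoritative for the exact minimum target build.

```python
from datetime import date

# Minimal, hypothetical catalogue holding only the two builds from the
# example above, with the dates given there.
BUILD_DATES = {
    "6.5 U3 EP 20": date(2020, 6, 30),
    "6.7 U1": date(2018, 10, 16),
}


def is_back_in_time(current: str, target: str) -> bool:
    """True if the target build is older than the running build, i.e. the
    upgrade would be 'back-in-time' under this simplified date rule."""
    return BUILD_DATES[target] < BUILD_DATES[current]


# 6.7 U1 has the higher version number but the older date.
print(is_back_in_time("6.5 U3 EP 20", "6.7 U1"))  # True
```

The point of the sketch is that the version number alone (6.7 versus 6.5) tells you nothing here; only the build dates reveal that 6.7 U1 is the older release.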

5 Comments

Mark · August 31, 2020 at 2:51 am

VMware has a good KB on update order of operations for those who are interested! I think it’s still fairly accurate.

6.7 related:

https://kb.vmware.com/s/article/53710

Surprising to me, vcenter isn’t anywhere near the front of the line. Maybe unsurprisingly, hosts and vsan are basically last. Still interesting to see all the different products and where they line up!

Bryan van Eeden · September 3, 2020 at 7:49 am

Correct! That’s why I mentioned this in my conclusion. You should follow that link if you are upgrading an environment which uses a couple of vSphere products!

Uki · August 31, 2020 at 8:57 am

I never start an upgrade with the ESXi hosts, regardless of whether it’s vSAN or not. I always start with vCenter.

Bryan van Eeden · September 3, 2020 at 7:50 am

Well, that would be good enough if you only had a VMware vCenter Server, ESXi hosts and vSAN. If you have anything else, you should follow the update sequence KB I mentioned in my conclusion (or the one Mark mentioned in the comments).

Mike Anderson · April 24, 2026 at 5:11 pm

Good takeaway about always checking the compatibility matrix before upgrading. It’s a small step that can prevent big issues later.