Introduction

In today’s big webcast VMware made some interesting announcements for all VMware vSphere, vSAN and VMware Cloud Foundation (VCF) enthusiasts. I’ve been working with most of the new additions for a couple of weeks now, and today the next major update in the vSphere portfolio was publicly announced: VMware vSphere 7 Update 1, VMware vSAN 7 Update 1 and VCF 4.1! It will take another month or so before we can download the bits; availability is expected on 30 October 2020.

These new updates are, in my opinion, actually major upgrades to the products. VMware really stepped up their game and made sure they listened to all the feedback they received on their wonderful vSphere 7 release with Tanzu in combination with VCF.

In this blogpost I will guide you through the most important updates to these products, and just like in other blogposts I will link the Release Notes here once they are publicly available!

vSphere 7 Update 1

vSphere with Tanzu

Not only did VMware add a couple of nice features to the product with this version, they also included “VMware vSphere with Tanzu”! If you are familiar with my previous post on vSphere 7 you might be aware that if you wanted to use the VMware Tanzu products you could only do this on a VCF 4.0 environment, which meant you also had to use a boatload of other products and NSX-T. In this release VMware removed that “restriction” and made sure that we can use the Tanzu products on a regular brown-field vSphere environment. This way we can drop enterprise-grade Kubernetes right into our existing brown-field infrastructure. Because of this we are now free to pick our own network, storage and compute for our environments, which brings a lot of flexibility in delivering a developer-ready infrastructure.

If you look at this from an overall SDDC perspective, you might find yourself liking the architecture below that goes with this:

So I hear everybody thinking to themselves, how does this work? Well, it’s actually quite easy. Have a look at the below figure:

As you can see above, Tanzu in vSphere will integrate with and make use of the vSphere Distributed Switch. This also means that we are no longer required to use NSX-T to make use of Tanzu. You actually only need two (VLAN-backed) portgroups for the Tanzu software. The first is the Management portgroup on which, you guessed it, management network traffic will flow to manage all the K8s bits. The second is the Workload portgroup, which carries the workload network traffic coming from the containers. A third portgroup is not strictly needed as far as I understood, but it can be used to guide Frontend traffic.

All of these enhancements and additions to the product allow you to deploy and use vSphere with Tanzu in under an hour! Well this was about time if you ask me!

vSphere Lifecycle Manager (vLCM) enhancements

In vSphere 7 VMware changed how we update our vSphere clusters with the release of vSphere Lifecycle Manager (vLCM). vSphere Update Manager will be replaced by this in the end, but for now we can still use the legacy VUM services if we would like to. This new service allows us to use a desired state image across the cluster, use RESTful APIs to update the clusters and install vendor-specific add-ons and firmware right from the vSphere Client. If you want to know more about vLCM I suggest you have a look at this blogpost!

With the release of vSphere 7 Update 1 we can now use vLCM to update our NSX-T environment. It probably won’t be ready to use right at the release of this update, because it also requires a new NSX-T update which hasn’t been announced yet. But once that is available you will be able to do the following with NSX-T while leveraging vLCM:

- Installation of NSX-T components

- Upgrade of NSX-T components

- Uninstallation of NSX-T components

- Add/Remove a host to an NSX-T cluster

  - NSX Manager will update the Transport Node Profile (TNP) during this action and vLCM will install the NSX-T bits to the ESXi hosts.

- Move hosts to a vLCM enabled cluster

vLCM will also inform the NSX Manager if there is a drift in configuration with regard to the NSX-T components. In addition to this, NSX Manager also has the capability to automatically resolve these drift issues.

vSphere Scalability

With every new release we also get a new list of supported maximums in a vSphere environment. This release is no different; VMware increased the following maximums:

| Compute Resource | vSphere 7 | vSphere 7 Update 1 |
|---|---|---|
| vCPU per VM | 256 | 768 |
| Memory per VM | 6 TB | 24 TB |
| Memory per host | 16 TB | 24 TB |
| Hosts per cluster | 64 | 96* |
| VMs per cluster | 6,400 | 10,000 |

These new maximums do require VM Hardware version 18. In addition, the limit of 96 hosts per cluster (*) applies only to non-vSAN clusters. The vSAN cluster limit is still 64 nodes.
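To make the table and the vSAN caveat above a bit more tangible, here is a minimal Python sketch that checks a planned cluster against these numbers. The dictionary layout and the `check_cluster` function are purely illustrative names of my own, not part of any VMware tooling; the limits themselves are copied from the table.

```python
# Limits copied from the table above. The names and structure are
# illustrative assumptions, not a VMware API.
VSPHERE_7_U1_MAXIMUMS = {
    "vcpu_per_vm": 768,
    "memory_per_vm_tb": 24,
    "memory_per_host_tb": 24,
    "hosts_per_cluster": 96,   # applies to non-vSAN clusters only
    "vms_per_cluster": 10_000,
}
VSAN_HOSTS_PER_CLUSTER = 64    # vSAN clusters keep the 64-node limit


def check_cluster(hosts, vms, vsan_enabled):
    """Return a list of violations; an empty list means within limits."""
    if vsan_enabled:
        host_limit = VSAN_HOSTS_PER_CLUSTER
    else:
        host_limit = VSPHERE_7_U1_MAXIMUMS["hosts_per_cluster"]

    problems = []
    if hosts > host_limit:
        problems.append(f"{hosts} hosts exceeds the limit of {host_limit}")
    vm_limit = VSPHERE_7_U1_MAXIMUMS["vms_per_cluster"]
    if vms > vm_limit:
        problems.append(f"{vms} VMs exceeds the limit of {vm_limit}")
    return problems


if __name__ == "__main__":
    print(check_cluster(hosts=96, vms=8_000, vsan_enabled=False))
    print(check_cluster(hosts=96, vms=8_000, vsan_enabled=True))
```

The first call stays within the limits, while the second one flags the host count because the cluster is vSAN enabled.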

EVC for Graphics

Another nice addition to this release is the fact that we can now use EVC for Graphics. This means that we can now work seamlessly across various generations of hardware while consuming a consistent set of features, just like with CPU EVC. This also supports vMotion, is initially supported for a baseline graphics mode (vSGA), and is available on a per-VM basis. This setting is configured on the cluster level, like normal EVC is.

VDDK SDK Improvements

This time around there are also some small improvements to the VDDK SDK. These are the most important changes:

- vCenter Server 7 Update 1 uses the hostd process instead of the vpxa process to manage NBD connections. This does not change the maximum of 50 parallel backups.

- We can now use NIOC to prioritize backup traffic.

- Backup jobs now get moved to another host in the cluster when the original host goes into maintenance mode.

vSphere Ideas

The last enhancement in the vSphere 7 Update 1 release is the addition of a Feedback submission mechanism right into the vSphere Client. I’ve seen VMware listening to more and more feedback from their customers and the community for a while now. The process to provide this feedback was tedious and so they improved on this and added the submission of feedback right into the vSphere Client.

vSAN 7 Update 1

Since VMware released vSAN 7 we’ve gained so many new features like integration with Lifecycle Management (vLCM), vSAN Native File Services, even better Cloud Native Storage, new vSAN metrics, awareness of vSphere Replication in conjunction with vSAN and NVMe hotplug support.

In this release we’ve gained even more. And like I said before at the start of this blogpost, I think Update 1 is a major upgrade to the product. And I will tell you why in the following couple of paragraphs.

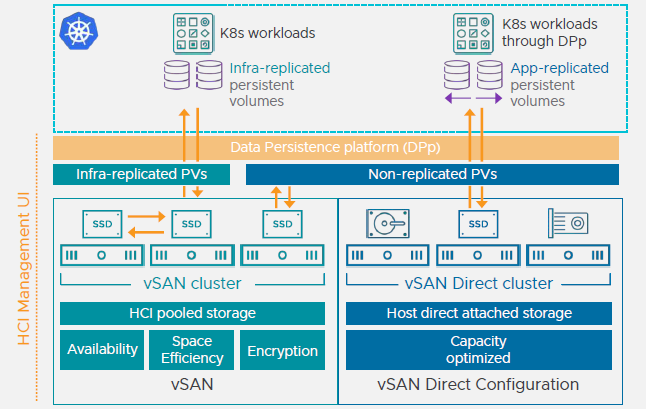

VMware vSAN Data Persistence platform (DPp)

This is something completely new in VMware vSAN. With VMware vSAN DPp we now have a framework with which we can integrate stateful applications right onto vSAN. This further extends the Cloud Native Storage (CNS) capabilities of the product. It does require a third-party integration; the specific partners that are able to work with this framework will be announced soon. It is available with VCF with Tanzu and on vSAN Enterprise, so make sure you have the correct license. In addition to this we can now also use vVols to provision the Cloud Native Storage volumes.

vSAN Direct Configuration

Another new addition to the product is that there now is a vSAN configuration called vSAN Direct. With vSAN Direct we can deliver storage from storage optimized and customized vSAN ReadyNodes right inside the vSAN environment. The difference with a regular vSAN host is that the regular vSAN host adds its storage to a pool of storage that belongs to the cluster. The vSAN Direct host has direct attached storage which is provided to the cluster without adding it to the cluster wide storage pool.

The main use case for this type of configuration is to provide a way to create highly optimized storage nodes that deliver the volumes to shared-nothing applications. You can see how this works in the following figure:

Unfortunately it seems that this is only available as a part of the previously mentioned vSAN Data Persistence platform.

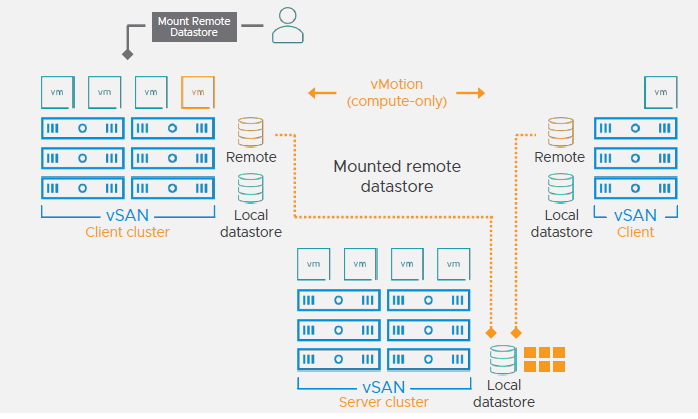

VMware vSAN HCI Mesh

The time has come that VMware vSAN is able to deliver the new “standard”, as far as I’m concerned, in the HCI world. It’s now possible to create a (semi) disaggregated HCI solution within your environment. Why semi? Well, because it’s not fully possible to scale storage and compute independently: you cannot add storage-only or compute-only nodes to the vSAN cluster. But what we can do is “borrow” storage from another vSAN cluster that is currently not being used.

Let’s say we have three vSAN clusters, and within each vSAN cluster we have a couple of hosts. Each one of them is working independently from the others and running a multitude of workloads. For argument’s sake let’s say that one of the vSAN clusters is full and there is no available capacity left to store new virtual machines. What VMware vSAN HCI Mesh allows us to do is borrow storage capacity from one of the two other available vSAN clusters. Think of this as a remote datastore. This remote datastore can be exported and mounted within vCenter on a vSAN cluster. Once the datastore is mounted, you can use it like any other datastore.

This method is actually really cool. It also allows for the following scenario: let’s suppose we remotely mount a datastore from vSAN cluster 2 to vSAN clusters 1 and 3. When we deploy a virtual machine in vSAN cluster 1 on the remote datastore from vSAN cluster 2, we can use a single compute-only vMotion to migrate the VM to vSAN cluster 3. Since the VM stays on the same datastore, the only thing that changes is the compute node it’s running on. I think the following figure displays this process neatly.

This all works with vSAN’s native protocol for efficiency and simplicity. This feature adds a layer of flexibility to the product. I won’t go as far as saying it’s now a completely disaggregated HCI solution, but it’s a start, that’s for sure! I can definitely see some use cases here in environments where you don’t want to buy more storage, but you temporarily need storage to deploy virtual machines. I can even see this becoming a migration/onboarding feature depending on the requirements, which have not yet been discussed. I do however know that this does not (yet) work with VCF!

vSAN Over-the-Wire encryption

The time has come that vSAN finally supports Data-in-Transit encryption. Since vSAN 6.6 we have had the choice to enable encryption on the cluster to configure Data-at-Rest encryption. This actually worked very nicely, and vSAN performed a rolling disk group reformat to enable this cluster-wide. Now vSAN has become even more secure with the addition of Data-in-Transit encryption. This means that data will also be encrypted from the point where it is created (inside the VM) until it is offloaded to the disk(s). Once the data is on the disk the Data-at-Rest encryption kicks in (if enabled). This way there is no possibility that the data can be intercepted and used.

Data-in-Transit encryption can be enabled independently from Data-at-Rest encryption, or the two can be used together. It uses the same FIPS 140-2 validated VMware VMkernel Cryptographic module as before. It also doesn’t just encrypt the data in flight, but also encrypts the metadata. And the best thing is that we don’t need an external KMS server to make this work. VMware built a way to manage the keys internally in the system. I am wondering how this will work out for troubleshooting and restoring, since we don’t have a KMS server. But I think we will see more of this soon, once the release notes have been made public and more documentation is available.

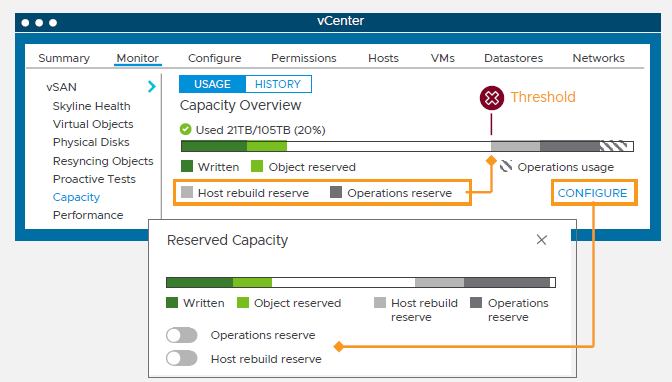

Less required free capacity and compression-only mode

With previous versions of vSAN it was required to have at least 25-30% of free storage capacity available within the cluster. This free space was needed for failures and transient operations such as creating new components, host evacuations and rebalancing or repairs. With this release VMware has improved the efficiency and made this fixed percentage a variable percentage based upon the number of hosts you have in the vSAN cluster.

I’m not sure how this works or what the formula is, but the 30% has been lowered to the following numbers, depending on how large the cluster is:

- 12 node cluster = ~18% free space

- 24 node cluster = ~14% free space

- 48 node cluster = ~12% free space

This is really useful for the larger environments. I’ll update the post once I know what the formula is and whether this also applies to smaller clusters. With this release the “free space” number is also divided into two categories:

- Operational reserve free space, for the transient operations I mentioned before.

- Host rebuild reserve free space, for rebuilding components.

Both of these categories are also visible through the Capacity Overview page in the vSAN cluster. This way you have a granular overview of the free space components in the cluster. A great addition that gives us more insight into the capacity requirements.
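To put the percentages from this section into perspective, here is a small Python sketch that turns them into terabytes. Since I don’t know the formula, it is a plain lookup of the three cluster sizes listed above and deliberately returns nothing for any other size; the function name and the 30% baseline constant are my own choices for the illustration.

```python
# Approximate free-space percentages as listed in this post.
# Only these three cluster sizes are known; the formula is not.
APPROX_FREE_SPACE_PCT = {12: 18, 24: 14, 48: 12}

# Upper end of the 25-30% guidance for previous vSAN versions.
LEGACY_FREE_SPACE_PCT = 30


def reserved_tb(raw_capacity_tb, node_count):
    """Return the approximate free space to keep, in TB, or None when
    the percentage for this cluster size is not known."""
    pct = APPROX_FREE_SPACE_PCT.get(node_count)
    if pct is None:
        return None
    return raw_capacity_tb * pct / 100


if __name__ == "__main__":
    raw_tb = 1000  # hypothetical raw capacity of a 24-node cluster
    new_reserve = reserved_tb(raw_tb, 24)
    old_reserve = raw_tb * LEGACY_FREE_SPACE_PCT / 100
    print(f"old reserve: {old_reserve} TB, new reserve: {new_reserve} TB")
```

For a hypothetical 24-node cluster with 1000 TB of raw capacity that is the difference between keeping roughly 300 TB and roughly 140 TB free.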

Something that’s also new is that we can now choose if we want Compression AND Deduplication enabled, or just Compression without Deduplication. Why would one do this? Well if you have a certain workload that simply isn’t dedupable (such as huge databases with unique data) the deduplication is just in the way of getting more performance because it uses CPU cycles that could be used elsewhere.

With this release we can just use a Compression only mode that actually really helps. Because we don’t need to do deduplication anymore we can destage the IO from the cache disk to the capacity layer in parallel to gain an even higher throughput!
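The reasoning above (unique data does not deduplicate, but it may still compress well) can be illustrated with a toy Python sketch. To be clear, this is not how vSAN implements deduplication or compression; the 4 KB block size, the fake “database rows” and the use of SHA-256 and zlib are all assumptions I picked for the example.

```python
import hashlib
import zlib

BLOCK_SIZE = 4096  # assumed block size for this toy model


def make_unique_blocks(count):
    """Build blocks that are all different, yet internally repetitive,
    a bit like database pages holding unique rows."""
    blocks = []
    for i in range(count):
        row = f"id={i:08d};status=active;".encode()
        repeats = BLOCK_SIZE // len(row) + 1
        blocks.append((row * repeats)[:BLOCK_SIZE])
    return blocks


def dedup_ratio(blocks):
    """Logical blocks divided by unique blocks (1.0 means no savings)."""
    unique = {hashlib.sha256(block).digest() for block in blocks}
    return len(blocks) / len(unique)


def compression_ratio(blocks):
    """Raw bytes divided by bytes after compressing each block."""
    raw = sum(len(block) for block in blocks)
    compressed = sum(len(zlib.compress(block)) for block in blocks)
    return raw / compressed


if __name__ == "__main__":
    blocks = make_unique_blocks(1000)
    print(f"dedup ratio:       {dedup_ratio(blocks):.2f}")
    print(f"compression ratio: {compression_ratio(blocks):.2f}")
```

On data like this the deduplication pass does a lot of hashing without saving a single block, while compression still pays off, which is exactly the kind of workload the compression-only mode is aimed at.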

Witness host consolidation

This new feature might just save everybody quite a few bucks! Where you previously had to deploy a (albeit tiny) vSAN Witness Appliance for each 2-node vSAN deployment, you can now use one vSAN Witness Appliance for up to 64 2-node vSAN clusters! Sizing details were not shared with me, but I am assuming that this will save us at least half of the resources that we would’ve needed before!

This also comes with a new UI so that we can easily manage all of these remote clusters in the single witness appliance!
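As a quick back-of-the-napkin calculation, the Python sketch below shows how many witness appliances you would need for a given number of 2-node clusters. It only uses the limit of 64 clusters per appliance mentioned above; because the actual sizing details are unknown to me, treat the result as a lower bound, and note that the function name is just something I made up.

```python
import math

# Limit mentioned in this post: one witness appliance can serve up to
# 64 2-node vSAN clusters. Real sizing may impose further constraints.
CLUSTERS_PER_WITNESS = 64


def witnesses_needed(two_node_clusters):
    """Minimum number of shared witness appliances for the given
    number of 2-node clusters."""
    if two_node_clusters < 0:
        raise ValueError("number of clusters cannot be negative")
    return math.ceil(two_node_clusters / CLUSTERS_PER_WITNESS)


if __name__ == "__main__":
    for clusters in (1, 64, 65, 200):
        before = clusters  # previously: one witness per cluster
        after = witnesses_needed(clusters)
        print(f"{clusters} clusters: {before} witnesses before, {after} now")
```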

Enhanced durability during maintenance mode

During a maintenance window with the maintenance mode option “Ensure Accessibility” the vSAN cluster ensures that objects remain accessible even if some components might be missing because of the host being rebooted and such. This mode is the most common maintenance mode because it limits the resyncing of components across the cluster.

In a scenario where you have an FTT=1, RAID-1 VM and are doing maintenance on a vSAN cluster, it is possible that another host will crash. While this could potentially mean downtime for a virtual machine if all components are now missing, it might also mean partial data loss for the last couple of writes to disk.

VMware has implemented an enhancement to this mechanism that prevents this. In this version, if you lose a second host in the cluster while doing maintenance, vSAN captures the latest writes on an additional third host. Once the second, failed host comes back online, it quickly merges these writes into the stale components. This isn’t a rebuild of the entire stripe, but rather a quick resync/catch-up mechanism so that the last writes don’t get lost. Pretty neat if you ask me. The figure below explains this a little better than text.

Quicker vSAN Host reboots

Have you ever had the chance to witness an ESXi host reboot where vSAN was enabled in the cluster? Well, if you have and you’ve looked at the console, you might have noticed that it can sometimes take ages before the host is back up again. This is because the host has to process all log entries and generate all required (new) metadata tables in memory.

With this release VMware tried to make this process quicker by introducing a quiesce mechanism that snapshots the in-memory metadata tables, places a copy on the vSAN cache disk in the host and restores this snapshot once the host comes back online. This mechanism can and will be used on all graceful host restarts. That makes sense: when a host crashes it won’t have time to create a snapshot. If your host cannot use this new method, the previous method will be used.

Routed vSAN Topology enhancement

This one is actually a welcome enhancement of the product. In previous vSAN versions, if we wanted to create a Routed vSAN Topology we needed to add static routes from the CLI inside the ESXi host, so that the vSAN vmkernel adapter knew where to send its traffic to get it routed to the other environment. In vSAN 7 Update 1 you can now simply add or change the gateway for the vSAN vmkernel adapter through the vSphere Client.

This effectively eliminates CLI operations from the ESXi hosts and provides an improved visibility of the currently used settings in each ESXi host.

vLCM enhancement

Like I mentioned earlier in the blogpost with vLCM you can also update vSAN environments to maintain your desired state cluster image on the environment. With vLCM you can also update hardware firmware versions. In the previous release only HPE and Dell were supported, now Lenovo is added to the list of compatible hardware vendors. Next to this vLCM is now aware of vSAN Fault domains and stretched clusters!

Secure erase decommissioned storage

Ever had to replace or decommission disks that were previously used by vSAN? Well, you might like it that it’s now possible to secure erase a disk through PowerCLI with a simple “Wipe-Disk” command. The secure erasing of the disk is fully compliant with NIST standards. At the moment this only works on NVMe, SATA and SAS flash-based devices from HPE and Dell. No other vendor is currently supported.

IOInsight built into vSAN

This release contains something that I wish I had before. Can you remember the IO Insight fling that a lot of us have used for ages to analyze a VM’s IO behaviour and patterns? Well, I have good news for you. Starting with vSAN 7 Update 1 this fling is more or less built into the product and the vSphere Client. We can now easily monitor the most common I/O metrics on vSAN workloads, such as sequential/random I/O ratios, aligned/unaligned I/O blocks, I/O size distribution and I/O latency distribution!

There are also some enhancements to the vSAN Performance tab in the vSphere Client. We can now select multiple VMs and view performance information in one graph in a single location. This makes comparing VM workloads a breeze! And best of all, the Virtual SCSI Latency chart has been updated to show us the IO latency that resulted from an SPBM-based IOPS limit! This would’ve saved me a lot of work while troubleshooting vSAN environments. It’s going to look something like the figure below:

vSAN File Services enhancements

With the previous release we gained the ability to use vSAN to create file shares and distribute them to our VMs in the environment. vSAN File Services has now gained some enhancements that I think are most welcome to the product. In the previous version you could only create NFS shares with NFS v3 and NFS v4.1.

In this release we can now use SMB v2.1 and v3 together with Active Directory integration to allow for easy access rights and management. It’s also now possible to use Kerberos in conjunction with NFS. The scaling restriction from the previous release has been relaxed as well: where the vDFS file share system was auto-scaled to 8 hosts per cluster, it’s now auto-scaled to 32 hosts per cluster. This will help increase the reliability of the service.

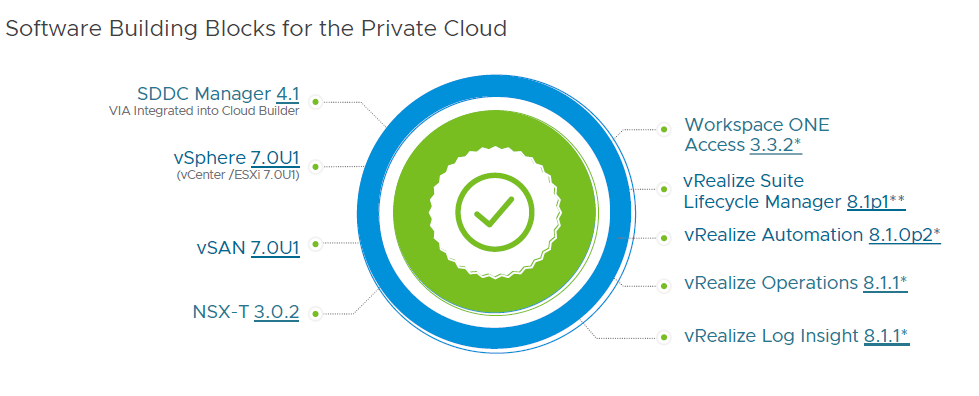

VMware Cloud Foundation (VCF) 4.1

The next installment of VMware Cloud Foundation (VCF), version 4.1, of course brings us a new BOM with the following supported versions:

- SDDC Manager 4.1, which includes the VMware Imaging Appliance (VIA) built into Cloud Builder

- vSphere 7.0 U1 with vCenter and ESXi 7.0 U1

- vSAN 7.0 U1

- NSX-T 3.0.2

- Workspace ONE Access 3.3.2

- The vRealize 8.1 suite including vRA 8.1p1, vROPS 8.1.0p2, vRLI 8.1.1 and vRSLCM 8.1.1

VCF Remote Cluster Deployment

Another big new addition to the product is a deployment model called VCF Remote Clusters. Currently in VCF you can choose between three deployment models: a single VCF Site Deployment, a VCF Stretched Cluster for Workload Domains Deployment and the VCF Multi-instance Management Deployment with SDDC Federation. The latest addition, called VCF Remote Clusters, will allow you to add, you guessed it, remote compute clusters with dedicated vCenters to the SDDC Manager as a workload domain. You can have a 1:1 relationship between a vCenter and a compute cluster, or a 1:x relationship. The following figure explains this:

Once you’ve added the remote cluster to the SDDC Manager it will be fully manageable through SDDC Manager, including lifecycle management. You will have to work with some constraints, though. You need at least 3 hosts in the cluster, with a maximum of 4, and you are going to need at least 10 Mbps of bandwidth with a maximum of 50 ms latency. The last restriction is that Remote Clusters will only be supported on VCF 3.9 and later. I think this is a welcome addition that adds a nice new level of flexibility to the product and helps expand its reach!
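If you want to sanity-check a planned remote site against these constraints, a minimal Python sketch could look like the one below. The class, field and function names are hypothetical and have nothing to do with the SDDC Manager API; only the numbers (3 to 4 hosts, at least 10 Mbps, at most 50 ms) come from the paragraph above.

```python
from dataclasses import dataclass

# Constraints as described in this post; names are illustrative only.
MIN_HOSTS = 3
MAX_HOSTS = 4
MIN_BANDWIDTH_MBPS = 10
MAX_LATENCY_MS = 50


@dataclass
class RemoteClusterPlan:
    hosts: int
    bandwidth_mbps: float
    latency_ms: float


def violations(plan):
    """Return the list of constraints the planned remote cluster breaks."""
    problems = []
    if not MIN_HOSTS <= plan.hosts <= MAX_HOSTS:
        problems.append(
            f"host count {plan.hosts} is outside {MIN_HOSTS}-{MAX_HOSTS}"
        )
    if plan.bandwidth_mbps < MIN_BANDWIDTH_MBPS:
        problems.append(
            f"bandwidth {plan.bandwidth_mbps} Mbps is below {MIN_BANDWIDTH_MBPS} Mbps"
        )
    if plan.latency_ms > MAX_LATENCY_MS:
        problems.append(
            f"latency {plan.latency_ms} ms is above {MAX_LATENCY_MS} ms"
        )
    return problems


if __name__ == "__main__":
    print(violations(RemoteClusterPlan(hosts=3, bandwidth_mbps=20, latency_ms=35)))
    print(violations(RemoteClusterPlan(hosts=6, bandwidth_mbps=5, latency_ms=80)))
```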

Scoping ESXi and NSX-T upgrades

In this release VMware added new functionality to the lifecycle management part. It’s now possible to scope ESXi cluster and NSX-T upgrades. In previous VCF versions you needed to patch the complete workload domain in one (large) maintenance window. This is cumbersome and takes too much time overall. So to mitigate this, VMware added the possibility to scope ESXi updates on a per-cluster basis.

This essentially means you can select which clusters you want to update, or even patch clusters in parallel, which greatly reduces the maintenance window. This in turn means it is now supported, although I am guessing this will be on a temporary basis, to run different ESXi versions in a workload domain. You can now also enable Quickboot to accelerate reboot times and shorten the maintenance window even further.

This is not limited to ESXi updates; you can now also use this mechanism to scope the NSX updates within a workload domain. You now have the ability to patch hosts, edges and NSX Managers independently from each other.

vVols 2.0 Principal Storage Option

Starting with VCF 3.9 you are able to use FC to provide a VMFS volume as principal storage during the deployment of the environment. Starting with version 4.1 we’ve now gained support for vVols 2.0 as a principal storage option for the workload domain. The community has been asking for this forever, and VMware finally decided it was time to implement it.

Using vVols as principal storage is also almost completely integrated within the SDDC Manager UI now. You can see space occupation, add VASA Provider details to the SDDC Manager, create Workload Domains with vVols as Principal Storage and automatically have the vVols datastore created on the workload domain. The only things you still need to do outside of the SDDC Manager are preparing the hosts, configuring the VASA provider on the storage array and using SPBM policies in vCenter.

I think I covered the most important items that we got in this release. Looks like this post became a very long one again. I hope everybody had fun reading it. Come back to the blog in the future for more blogposts!
