Recently we ran into some odd issues on one of our customer's vCenter Servers. For starters, vMotion and Storage vMotion had stopped working because of timeouts, which is something I had never seen before. So we started troubleshooting the VCSA and noticed that it could no longer retrieve the installed licenses (VMware vSphere Enterprise Plus) from the production ESXi hosts.

The “Licensed Features” tab in the vSphere Client (VCSA version 6.0 GA) usually gives you a nice overview of which vSphere license is installed, but this time it was simply empty. Connecting to the ESXi host directly, however, showed that the license was present and activated. We also noticed that the License module in the vSphere Client was timing out.

Once we dove into the license service log in “/var/log/vmware/cis-license/license.log” we noticed certificate-related issues with the Security Token Service (STS), the SSO service and the web client service. That got me looking at the certificates on this vCenter Server Appliance. Below are some log snippets that you can use to match your problem to the one I was having:

license.log:

2019-05-13T13:49:10.674Z Timer-3 WARN core.management.maint.service.AssetInventoryMaintainerTimerTaskImpl Maintanance of the asset inventory failed.

com.vmware.cis.license.server.common.provider.ClientStubProviderException: com.vmware.vim.vmomi.client.exception.SslException: com.vmware.vim.vmomi.core.exception.CertificateValidationException: Server certificate chain not verified

Caused by: javax.net.ssl.SSLPeerUnverifiedException: peer not authenticated

at sun.security.ssl.SSLSessionImpl.getPeerCertificates(Unknown Source)

at com.vmware.vim.vmomi.client.http.impl.ThumbprintTrustManager$HostnameVerifier.verify(ThumbprintTrustManager.java:296)

... 44 more

2019-05-13T13:22:43.443Z pool-3-thread-1 WARN common.vmomi.authn.impl.SsoAuthenticatorImpl STS signing certificates are missing or empty

2019-05-13T13:22:43.601Z pool-3-thread-1 ERROR server.common.sso.impl.SsoAdminProviderImpl Refetch STS certificates failed

You can use the following CLI commands to check your certificate stores and the certificates in them:

/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store MACHINE_SSL_CERT --text | less

/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store machine --text | less

/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store vpxd --text | less

/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store vsphere-webclient --text | less

All certificates checked out, except for, guess what, the “MACHINE_SSL_CERT”. It turned out to be expired. The funny thing is that this particular vCenter Appliance shouldn’t even have been working anymore, because once the certificate has expired, most of the time not all vCenter services will start after a reboot. In our case they somehow did.

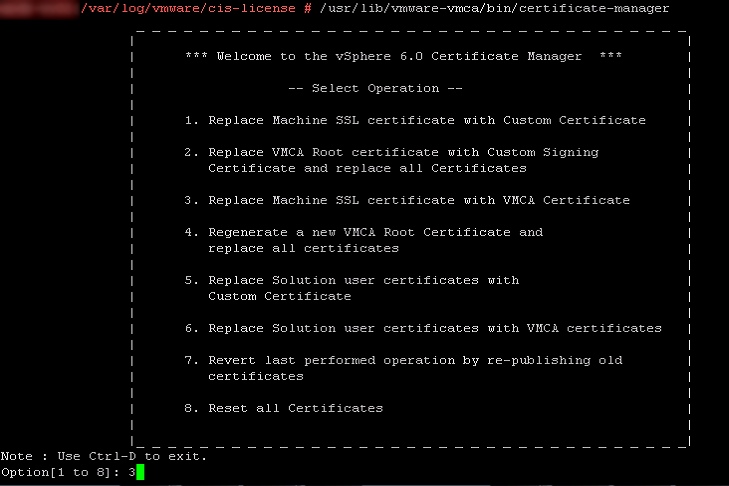
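To avoid eyeballing each store, the expiration dates can be filtered out of that output with a small helper. This is a minimal sketch and not something we used during the original troubleshooting: `report_expiry` is a made-up name, it assumes the `--text` output contains openssl-style `Not After :` lines, and it relies on GNU `date -d` being available on the appliance.

```shell
# Hypothetical helper: reads `vecs-cli entry list --text` output on stdin and
# prints one line per certificate with its "Not After" date and a status.
# Assumes openssl-style "Not After : <date>" lines and GNU date (-d).
report_expiry() {
    now=$(date +%s)
    sed -n 's/.*Not After *: *//p' | while IFS= read -r not_after; do
        # Skip dates that date cannot parse instead of guessing
        end=$(date -d "$not_after" +%s 2>/dev/null) || continue
        if [ "$end" -lt "$now" ]; then
            echo "EXPIRED: $not_after"
        else
            echo "OK: $not_after"
        fi
    done
}
```

It could be used as `/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store MACHINE_SSL_CERT --text | report_expiry`, repeated for each store listed above.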

So we went ahead and fired up the “certificate-manager” tool, which can be found in “/usr/lib/vmware-vmca/bin/certificate-manager”, picked option 3 to replace the Machine SSL certificate with a VMCA certificate (which is a self-signed certificate, but that’s fine for this environment), entered the information from the current certificate, such as hostnames and IP addresses, and accepted all changes.

Once you accept the proposed change, the tool updates the certificate in every location where it is needed and then stops and starts all services. Piece of cake. Our certificate-manager, however, decided it was time to throw an error:

ERROR certificate-manager Error while starting services, please see log for more details

certificate-manager Error while replacing Machine SSL Cert, please see /var/log/vmware/vmcad/certificate-manager.log for more information.

Once we checked that log we saw that the certificate-manager tool couldn’t start the “vmware-eam” service. See the log snippet below, which can be found in “/var/log/vmware/vmcad/certificate-manager.log”:

Waiting for VMware ESX Agent Manager.......

WARNING: VMware ESX Agent Manager may have failed to start.

Last login: Mon May 13 13:22:44 UTC 2019 on console

Stderr =

2019-05-13T13:47:40.139Z {

"resolution": null,

"detail": [

{

"args": [

"Command: ['/sbin/service', u'vmware-eam', 'start']\nStderr: "

],

"id": "install.ciscommon.command.errinvoke",

"localized": "An error occurred while invoking external command : 'Command: ['/sbin/service', u'vmware-eam', 'start']\nStderr: '",

"translatable": "An error occurred while invoking external command : '%(0)s'"

}

],

"componentKey": null,

"problemId": null

}

ERROR:root:Unable to start service vmware-eam, Exception: {

"resolution": null,

"detail": [

{

"args": [

"vmware-eam"

],

"id": "install.ciscommon.service.failstart",

"localized": "An error occurred while starting service 'vmware-eam'",

"translatable": "An error occurred while starting service '%(0)s'"

}

],

"componentKey": null,

"problemId": null

}
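When the log is long, the names of the failing services can be filtered out rather than read by eye. A minimal sketch, assuming the `Unable to start service <name>, Exception` line format shown in the snippet above; `failed_services` is a hypothetical helper name, not part of any VMware tooling:

```shell
# Hypothetical helper: reads certificate-manager.log content on stdin and
# prints each service that could not be started, once.
failed_services() {
    sed -n 's/.*Unable to start service \([^,]*\),.*/\1/p' | sort -u
}
```

For example: `failed_services < /var/log/vmware/vmcad/certificate-manager.log`.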

Sure enough, we were hitting a bug in our vCenter Server Appliance that prevents the EAM service from starting after a vCenter reboot. The bug deletes the “eam.properties” file in the “/etc/vmware-eam/” directory. This file is crucial for the service to start and know what to do. Since the file was missing in our environment, the “vmware-eam” service was broken. This VMware KB explains how to fix this: you download the attachment called “Recreate_eam.properties.sh” and run it. The script recreates the eam.properties file so that your “vmware-eam” service can start again. Please note that you can only run this script on the vCenter Server that actually runs the EAM service. The steps to run this script are described below:

Step 1:

Download the script and upload it to your vCenter Server

Step 2:

Create a backup of the current eam.properties file (if present). Don't forget to create a VM snapshot as well.

Step 3: Determine the host ID:

cat /etc/vmware/install-defaults/sca.hostid

Step 4: Determine the vCenter Server appliance hostname

hostname -f

Step 5: Set permissions on the Recreate_eam.properties.sh file

chmod 777 Recreate_eam.properties.sh

Step 6: Run the Recreate_eam.properties.sh file:

./Recreate_eam.properties.sh and enter the required information

Step 7: Check /etc/vmware-eam/eam.properties for the correct hostname and host ID, which you collected earlier

Step 8: Start the "vmware-eam" service.

service-control --start vmware-eam

Step 9: Re-run the certificate manager with your previously entered information

/usr/lib/vmware-vmca/bin/certificate-manager and select Option 3

In our situation this almost fixed our issues. We still had to interrupt the certificate-manager procedure midway, at the point where it begins starting the services again after it has updated the “MACHINE_SSL_CERT” wherever it needs to. You can do this by pressing CTRL+C at the right time in the procedure. To find that moment, open another PuTTY session to the vCenter Server and run the following command:

tail -f /var/log/vmware/vmcad/certificate-manager.log

Press CTRL+C in the certificate-manager session when the following log entries pass by:

2019-05-13T14:15:06.607Z INFO certificate-manager Running command :- service-control --stop --ignore --all

2019-05-13T14:15:06.608Z INFO certificate-manager please see service-control.log for service status

INFO:root:Service: vmware-psc-client, Action: stop

INFO:root:Service: vmware-syslog-health, Action: stop

INFO:root:Service: vmware-vsan-health, Action: stop

INFO:root:Service: applmgmt, Action: stop

INFO:root:Service: vmware-eam, Action: stop

INFO:root:Service: vmware-mbcs, Action: stop

INFO:root:Service: vmware-netdumper, Action: stop

INFO:root:Service: vmware-perfcharts, Action: stop

INFO:root:Service: vmware-rbd-watchdog, Action: stop

INFO:root:Service: vmware-sps, Action: stop

INFO:root:Service: vmware-vapi-endpoint, Action: stop

INFO:root:Service: vmware-vdcs, Action: stop

INFO:root:Service: vmware-vpx-workflow, Action: stop

INFO:root:Service: vmware-vsm, Action: stop

INFO:root:Service: vsphere-client, Action: stop

INFO:root:Service: vmware-vpxd, Action: stop

INFO:root:Service: vmware-cis-license, Action: stop

INFO:root:Service: vmware-invsvc, Action: stop

INFO:root:Service: vmware-vpostgres, Action: stop

INFO:root:Service: vmware-syslog, Action: stop

INFO:root:Service: vmware-sca, Action: stop

INFO:root:Service: vmware-vws, Action: stop

INFO:root:Service: vmware-cm, Action: stop

INFO:root:Service: vmware-rhttpproxy, Action: stop

INFO:root:Service: vmware-stsd, Action: stop

INFO:root:Service: vmware-sts-idmd, Action: stop

INFO:root:Service: vmcad, Action: stop

INFO:root:Service: vmdird, Action: stop

INFO:root:Service: vmafdd, Action: stop

2019-05-13T14:15:52.728Z INFO certificate-manager Command executed successfully

2019-05-13T14:15:52.728Z INFO certificate-manager all services stopped successfully.

2019-05-13T14:15:52.728Z INFO certificate-manager None

2019-05-13T14:15:52.729Z INFO certificate-manager Running command :- service-control --start --all

2019-05-13T14:15:52.729Z INFO certificate-manager please see service-control.log for service status

Once you are at this point, start the services yourself with:

service-control --start --all

This should start all the services nicely. After this we had our VMware vCenter Server Appliance working again with a fresh “MACHINE_SSL_CERT” certificate. As a last check you can run the following command and verify the expiration date:

/usr/lib/vmware-vmafd/bin/vecs-cli entry list --store MACHINE_SSL_CERT --text | less

There you have it. I figured this would be an easy, quick fix, but it turned out we were also facing a bug in the “vmware-eam” service. I hope this post helps if you run into the same issues we did.
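For the CTRL+C timing described earlier, the second session can flag the moment for you instead of leaving you to watch the log scroll by. This is only a sketch: `watch_for_restart` is a hypothetical helper, and it assumes the log line `Running command :- service-control --start --all` appears exactly as in the snippet above.

```shell
# Hypothetical helper: reads certificate-manager.log lines on stdin and prints
# a notice as soon as the tool begins starting the services again.
watch_for_restart() {
    awk '/Running command :- service-control --start --all/ {
        print "Services are being started: press CTRL+C in the certificate-manager session now"
        exit
    }'
}
```

It would be fed from the same tail command: `tail -f /var/log/vmware/vmcad/certificate-manager.log | watch_for_restart`. The `tail -f` keeps running after the notice is printed, so stop it with CTRL+C once you are done.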

7 Comments

Howard · June 30, 2020 at 11:34 pm

Thank you very much! I hadn’t thought of CTRL+C to prevent the scripts from reverting the certificate updates when some services won’t start. Great tip! I was able to get the problem resolved before VMWare support even called me back. 🙂

Nath · December 11, 2020 at 5:27 pm

mate -this was sterling advice – thanks man – Happy Christmas!

Erhin Hensel · March 31, 2021 at 9:32 pm

I have to say VMware esx is full of bugs, even in a mature version like esx 6.7 cu3

Autostart doesn’t work (which is very very bad because the vcenter server has to run)

So starting the “photon-machine” manually.

Later my vcenter server did not register with AD. Error message said (decoded) clocks are totally out of sync. So I checked the system logs… what? Installed today but log entries from 2013? The host did have a bad CMOS battery but I did not pay attention. However the vCenter app did survive some reboots. And yes, the app is so damned crude that it doesn’t even have its own time sync; the network configuration has no option to configure an NTP server.

Then I checked the BIOS of the host. Corrected the date. And then… guess. Vcenter is dead. Because short-minded people at VMware created a self-signed certificate with a short expiration time of a damned TWO YEARS. Meaning the vcenter server kills itself (in labs without tight certificate management) after 2 years. My browser tells me that the certificate was valid between 7/8/2013 and 7/8/2015.

Roxx · July 25, 2022 at 10:31 am

You saved me today with the CTRL-C trick! Thank you very much <3

Nick · August 30, 2023 at 6:04 pm

VCSA with embedded PSC, skyline is telling me that Health Check is set to AutoStart but is not started and VAMI is showing overall health Gray and unable to get information on updates.

The bug is listed in this KB https://kb.vmware.com/s/article/57222

but is supposed to be fixed in my version which is later than the fixed version shown. Any Ideas?

Bryan van Eeden · September 8, 2023 at 8:20 am

Sometimes the error comes back in versions after the fix. Is the logging telling you the exact same thing as in the mentioned KB, or is logging providing you with different information?

jules · September 19, 2024 at 4:49 pm

Hi Thank you for this, it’s a great job. is it possible to have your email info? would like to pass vcap certification.